I have paid for software I never used. More than once. And if you have been running a small business for longer than a year, there is a very good chance you have too.

Not because you are careless with money. Not because you chose randomly. You read the reviews, watched the demo, signed up for the free trial, and genuinely believed this was the tool that would fix the problem. Then, three weeks later, it was just another tab you stopped opening and another line item on the credit card statement you stopped thinking about.

That pattern is more common than most founders admit, and it almost never starts where people think it does.

The tool is rarely the problem

When a SaaS tool fails inside a small business, the instinct is to blame the software. It was too complicated. The interface was not intuitive. The features did not match what was promised in the demo. Sometimes those things are true. But in most cases, the tool was not the failure point.

The failure point was the evaluation process that led to choosing it.

Most small business owners pick SaaS tools the way they pick restaurants when they are already hungry: quickly, based on surface impressions, and without nearly enough information about what they actually need. A recommendation from someone in a Facebook group. An ad that appeared at exactly the right moment. A Product Hunt feature that made the tool sound like it was built specifically for them.

None of those are bad starting points. The problem is that most founders go directly from discovery to sign-up without stopping to ask whether the tool actually fits how their business operates: not how they want it to operate, but how it actually works today.

That gap between surface appeal and operational fit is where most SaaS tool failures begin.

What actually happens after the sign-up

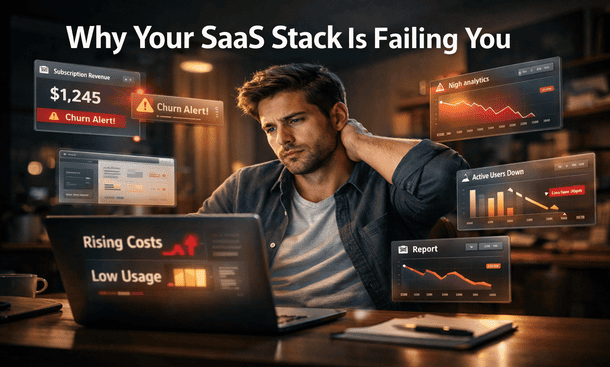

There is a predictable sequence that follows most SaaS tool adoptions in small businesses, and it is worth naming clearly, because recognizing the pattern is the first step toward breaking it.

Week one feels promising. The tool is new, the interface is interesting, and the founder is motivated enough to spend time exploring features and setting things up. Progress feels real, even when most of what is happening is configuration rather than actual work.

Week two is where the cracks appear. The setup is done, but the daily habit of using the tool has not formed yet. Team members were told about the switch but were not involved in choosing it, so they do not feel ownership over the new system. The founder starts using it inconsistently because real deadlines do not pause for tool transitions.

Week three is quiet. Nobody says the tool is not working. They just stop bringing it up. Tasks migrate back to email. Updates happen in Slack. The tool sits open in a browser tab that never gets closed but also never gets used.

By week four, the subscription is still active and the tool is functionally abandoned. The founder makes a mental note to cancel it and then forgets. Six months later, it is still on the credit card.

The three reasons SaaS tools fail small businesses specifically

Large companies fail at SaaS tool adoption too, but they have the resources to absorb the cost: dedicated IT teams, formal onboarding processes, training budgets. Small businesses do not. When a tool fails to stick in a small business, the cost is disproportionately high, because everyone involved is already operating at capacity and nobody has the bandwidth to recover gracefully from a failed implementation.

Three patterns show up consistently across small business SaaS failures, and they are worth understanding in detail.

Choosing for features instead of workflow fit

The most common mistake. A founder sees a feature list that sounds impressive (automations, integrations, advanced reporting, custom dashboards) and interprets capability as value. But capability only becomes value when it connects to how work actually moves through the business.

A tool with twenty features your team will not use is not more valuable than a tool with five features your team uses every day. It is less valuable, because it adds navigation complexity without adding operational benefit. And in a small business where team members are already stretched, the additional cognitive load of a feature-heavy interface is not neutral; it is friction that compounds until people stop using the tool entirely.

Skipping the integration audit

Every SaaS tool exists inside a stack. It has to communicate with the other tools your business depends on: your email, your calendar, your CRM, your accounting software, your communication platform. When a new tool cannot connect cleanly to the rest of the stack, something breaks at every handoff. Data has to be entered manually in multiple places. Information lives in two systems that do not agree. Team members develop workarounds that eventually become the actual workflow.

Most founders do not audit integrations before committing to a tool. They discover the gaps after the subscription is paid and the setup is done, at which point switching feels expensive even when staying is more expensive.
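
The audit itself can be as simple as listing every system the business already depends on and checking each one against what the candidate tool connects to. Here is a minimal Python sketch of that check, assuming you fill in the candidate's supported integrations yourself from the vendor's documentation and your own trial testing. The `CandidateTool` and `integration_gaps` names, the stack entries, and the product name are all hypothetical, used only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class CandidateTool:
    """A tool under evaluation (hypothetical structure for illustration)."""
    name: str
    # Integrations the vendor says it supports; fill these in from the
    # vendor's documentation and confirm them during the free trial.
    native_integrations: set[str] = field(default_factory=set)


def integration_gaps(current_stack: list[str], candidate: CandidateTool) -> list[str]:
    """Return the tools in the current stack that the candidate cannot
    connect to, in the same order as the stack list (case-insensitive match)."""
    supported = {name.lower() for name in candidate.native_integrations}
    return [tool for tool in current_stack if tool.lower() not in supported]


if __name__ == "__main__":
    # The categories named in the text: email, calendar, CRM, accounting, chat.
    stack = ["email", "calendar", "CRM", "accounting", "team chat"]
    candidate = CandidateTool(
        name="ExampleTaskApp",  # hypothetical product
        native_integrations={"email", "calendar", "team chat"},
    )
    for tool in integration_gaps(stack, candidate):
        print(f"{candidate.name}: no native integration with {tool}; "
              "expect manual entry at this handoff")
```

Every tool the function returns marks a handoff where data would otherwise be entered by hand. A gap is not automatically a dealbreaker, but it is far cheaper to price it in before the subscription starts than to discover it afterward.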

Underestimating the adoption gap

A founder chooses a tool. The founder understands why the tool was chosen. The team does not, because they were not part of the decision. They receive a message saying the team is switching to a new platform on Monday, and they are expected to figure it out from there.

This is how most SaaS tool rollouts happen in small businesses, and it is why most of them fail. Adoption is not a technical problem. It is a behavioral one. Getting a team to change how they work requires more than access credentials and a link to the onboarding documentation. It requires involvement before the decision is finalized and genuine buy-in from the people whose daily habits need to change.

A tool your team was not consulted about is a tool your team will not prioritize learning. That is not resistance; it is a rational response to feeling that change was done to them rather than with them.

The hidden cost nobody talks about

The subscription fee is the visible cost of a failed SaaS tool. It is also the smallest one.

The real cost is time. There is the time spent evaluating the tool before purchase: the demos watched, the comparison articles read, the free trial explored. The time spent setting it up: the workspace configured, the templates built, the team invited. The time spent trying to drive adoption: the Slack messages sent, the follow-up conversations had, the workarounds documented. And the time spent deciding to move on: the research into alternatives, the data migration, the process of starting the whole cycle again.

For a small business where every hour has a real dollar value attached to it, those costs add up to something significantly larger than the monthly subscription fee that shows up on the statement.

A founder billing at $100 an hour who spends 15 hours on a failed tool evaluation and implementation cycle has absorbed $1,500 in cost before the first invoice from the software company arrives. If that cycle repeats twice a year (a conservative estimate for early-stage businesses actively building their stack), the real annual cost of poor SaaS selection is $3,000 in time alone, before a single subscription fee is counted and before a single feature gets used.
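
To rerun that arithmetic with your own numbers, here is a minimal Python sketch. It simply multiplies hourly rate by hours per failed cycle by cycles per year, then adds any subscription fees as a single total. The function names are hypothetical, and every input is an assumption you supply; the example calls reuse the figures from the paragraph above, plus one hypothetical forgotten $30-a-month subscription left running for six months.

```python
def cycle_time_cost(hourly_rate: float, hours_per_cycle: float) -> float:
    """Cost of the hours spent evaluating, setting up, pushing adoption,
    and finally moving on from one tool that did not stick."""
    return hourly_rate * hours_per_cycle


def annual_selection_cost(
    hourly_rate: float,
    hours_per_cycle: float,
    cycles_per_year: int,
    subscription_fees: float = 0,
) -> float:
    """Time cost of every failed cycle in a year, plus the total paid in
    subscription fees for the abandoned tools."""
    time_cost = cycle_time_cost(hourly_rate, hours_per_cycle) * cycles_per_year
    return time_cost + subscription_fees


if __name__ == "__main__":
    # Figures from the text: $100/hour, 15 hours per failed cycle.
    print(f"One failed cycle: ${cycle_time_cost(100, 15):,.0f}")
    # Two failed cycles a year, time cost only.
    print(f"Two cycles a year: ${annual_selection_cost(100, 15, 2):,.0f}")
    # Hypothetical: one forgotten $30/month subscription left on for six months.
    print(f"Plus a forgotten subscription: ${annual_selection_cost(100, 15, 2, 30 * 6):,.0f}")
```

Run as a script, it prints $1,500, $3,000 and $3,180. The subscription line barely moves the total, which is the point: the fee on the statement is the smallest part of the cost.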

Why this keeps happening to smart founders

The SaaS industry is exceptionally good at one thing: making tools look simpler and more essential than they are during the evaluation phase. Demo environments are clean and pre-populated with perfect data. Review sites surface the strongest use cases and bury the implementation challenges. Free trials are designed to show capability during the window when motivation is highest, before the reality of daily operational use sets in.

None of that is inherently dishonest. But it means that the information available during the evaluation phase systematically overstates how easy adoption will be and understates how closely the tool needs to fit your specific workflow to deliver on its promise.

Smart founders fall into this trap not because they are not paying attention, but because they are evaluating based on the information available, and that information is shaped by companies with a financial interest in the purchase decision.

The antidote is not skepticism about every tool. It is a structured evaluation process that starts with the business’s actual workflow before any tool enters the conversation. That process (the filters, the questions, the integration audit, the adoption assessment) is what separates the small businesses that build functional tool stacks from the ones that keep cycling through subscriptions without building anything that sticks.

Understanding how to choose SaaS tools that actually fit the way your small business operates is the foundation that changes the outcome, because the selection process is where every implementation either succeeds or fails, long before the first login.

SaaS tools do not fail small businesses. The process of choosing them does.

The pattern is consistent enough and expensive enough that it is worth treating as a strategic problem rather than a series of individual unfortunate decisions. Each failed implementation is not bad luck. It is a signal that the evaluation framework (the questions asked before the purchase, the filters applied before the trial, the adoption plan built before the rollout) was not structured enough to produce a different result.

Getting that framework right does not require technical expertise or a large budget. It requires a different set of questions, asked in a different order: starting with the business and ending with the tool, rather than the other way around.

Once that shift happens, the cycle breaks. And the time and money that were disappearing into abandoned subscriptions start going somewhere more useful.

The next step in building that framework is understanding exactly what a structured pre-purchase evaluation looks like in practice: the specific filters that separate tools worth trying from tools worth skipping, and the questions that surface integration gaps and adoption risks before they cost anything. That process is broken down in full in "How to evaluate SaaS tools before you buy so you stop making expensive guesses."
