There is a version of running a small business where the tools you use actually make the work easier. Where your team knows where everything lives. Where data moves between systems without anyone manually copying it. Where the monthly subscription total represents genuine operational value rather than a collection of good intentions that never quite delivered.

Most small business owners have not built that version yet. Not because the right tools do not exist (they do), but because the process of choosing SaaS tools for a small business is broken in ways that are rarely acknowledged openly.

The average early-stage founder makes SaaS decisions reactively. A problem surfaces. Someone recommends a tool. The free trial looks promising. The subscription starts. Three months later the tool is underused, the problem is partially solved and two new problems have been created at the integration points where the new tool meets the existing stack. Multiply that cycle across five or six tools and you have a stack that costs more in time and money than it saves.

This guide is about breaking that cycle. Not by recommending specific tools, since the right tools depend entirely on how your business actually operates, but by building the decision framework that makes every SaaS choice more deliberate and more likely to deliver lasting value.

The framework covers five areas. Why most small businesses choose the wrong tools in the first place, and what the real failure point is. How to evaluate SaaS tools before spending anything, through a structured pre-purchase process. How to build a coherent stack where tools work together rather than against each other. How to implement a new tool in a way that produces genuine team adoption. And the specific mistakes that derail even well-chosen tools over time.

Each of those areas is a place where small businesses consistently lose time and money that a better approach would have protected.

Why most small businesses choose the wrong SaaS tools and pay for it twice

The failure point in most small business SaaS decisions is not the tool. It is the evaluation process that led to choosing it, or more often the absence of any structured evaluation process at all.

Most founders pick software the way they pick restaurants when they are already hungry. Quickly, based on surface impressions, without nearly enough information about whether the option in front of them fits what they actually need. A recommendation in a founder community. An ad that arrived at the right moment. A Product Hunt feature that made the tool sound purpose-built for exactly their situation.

None of those starting points is inherently wrong. The problem is that most founders move directly from discovery to sign-up without stopping to ask whether the tool fits how their business actually operates today: not how they want it to operate, but how it genuinely functions right now.

That gap between surface appeal and operational fit is where most SaaS tool failures begin. And the failure rarely announces itself clearly. It unfolds quietly over three to four weeks.

Week one feels promising. The tool is new, the interface is interesting and the motivation to explore it is high. Week two is where cracks appear: the daily habit of using the tool has not formed, and team members who were not involved in the decision do not feel ownership over the new system. Week three is quiet. Nobody says the tool is not working. They find workarounds. Tasks migrate back to email. By week four the subscription is active and the tool is functionally abandoned.

The subscription fee is the visible cost of that failure. It is also the smallest cost. The real cost includes the time spent evaluating the tool before purchase, configuring it, attempting to drive adoption and eventually making the decision to move on and start the cycle again. For a founder billing at a professional rate that cycle absorbs thousands of dollars in opportunity cost before a single feature delivers any value.

Three patterns drive this outcome consistently across different business types and different tool categories.

The first is choosing for features rather than workflow fit. A tool with capabilities the business will never use is not more valuable than a simpler tool used every day. Unused features are not neutral; they are friction that compounds until the interface feels overwhelming and people stop using it.

The second is skipping the integration audit. Every new tool enters a stack of existing systems. If it cannot communicate cleanly with the tools already in use, data gets duplicated, workflows break at handoff points and team members develop manual workarounds that eventually become the actual system.

The third is underestimating the adoption gap. A founder understands why a tool was chosen. The team does not, because they were not part of the decision. They receive an announcement and are expected to change their daily habits from Monday. That expectation almost always produces exactly the four-week failure sequence described above.

Understanding why SaaS tools fail small businesses, and what the real cause of those failures looks like in practice, is the clearest starting point for building a better approach. The problem is structural rather than incidental, and the fix has to happen at the evaluation stage rather than after the purchase.

How to evaluate SaaS tools before you buy: the framework that saves you months

The free trial is the SaaS industry’s answer to the evaluation problem. Try before you buy. Spend two weeks inside the platform and decide whether it works for your business.

In theory that is reasonable. In practice free trials are almost always evaluated under conditions that bear no resemblance to real operational use. The founder explores the interface during a window of high motivation. Sample projects get created. Features get clicked through. Everything feels promising because nothing is under real pressure yet.

Real operational use looks different. Deadlines arrive. Team members need onboarding without anyone having bandwidth to train them. A process that worked smoothly with one sample project breaks down when five real projects are running simultaneously with actual clients waiting on deliverables.

The free trial tells you what a tool can do. It does not tell you whether the tool fits how your business actually operates under normal conditions. Getting that answer requires a structured evaluation process that starts before the trial begins and shapes how the trial gets used.

Four filters applied before signing up for any free trial will eliminate most tools that look appealing on the surface but would fail in your specific operational context.

Workflow fit is the first filter and the one that eliminates the most candidates fastest. Before evaluating any tool, map how work actually moves through your business today. Where does work originate? How does it get assigned? Where do handoffs happen between team members? Where does it most often stall or fall through the cracks? Then evaluate any potential tool directly against that map. A tool built around sprint cycles does not serve a business running continuous client retainers well, regardless of its feature count. Workflow fit determines whether the tool works with the business or against it at every step of daily use.

Team adoption potential is the filter most founders skip entirely. Every tool you adopt will be used or not used by real people with real habits and genuine resistance to behavioral change. The question is not whether your team is capable of learning new software. Most people are. The question is whether the tool asks enough of them to produce resistance during the adoption phase and whether the people who will use it daily were involved in identifying the problem it is supposed to solve. A team member who participated in defining the problem is significantly more likely to adopt the solution than one who receives an announcement on a Monday morning.

Integration compatibility requires an audit before any purchase. Write a list of every tool your business currently depends on for daily operations: email, calendar, CRM, accounting software, communication platform, file storage. Check the new tool’s actual integration documentation against that list. Not the marketing page claiming thousands of integrations, but the technical documentation that describes what data syncs, in which direction and how frequently. A shallow integration that syncs in one direction on a 24-hour delay is not the same as a native two-way connection that updates in real time. The difference matters enormously in daily use, and discovering it after the subscription starts is far more expensive than discovering it before.
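One way to make that audit concrete is to record, for each tool you already depend on, what the candidate's technical documentation says about the connection. The Python sketch below is illustrative only: the `Integration` fields, the `audit_integrations` helper, the 60-minute delay limit and the sample values are all assumptions made for the example, not details of any real product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Integration:
    """What the candidate tool's documentation says about one connection."""
    two_way: bool               # does data sync in both directions?
    sync_delay_minutes: float   # 0 means real time


def audit_integrations(current_stack, documented, max_delay_minutes=60):
    """Return a list of issues for every tool in the current stack.

    An empty list means no issue was found for that tool.
    """
    findings = {}
    for tool in current_stack:
        issues = []
        integration = documented.get(tool)
        if integration is None:
            issues.append("no documented integration")
        else:
            if not integration.two_way:
                issues.append("one-way sync only")
            if integration.sync_delay_minutes > max_delay_minutes:
                issues.append("sync delay above limit")
        findings[tool] = issues
    return findings


# Hypothetical stack and hypothetical documentation findings.
stack = ["email", "calendar", "crm", "accounting"]
documented = {
    "email": Integration(two_way=True, sync_delay_minutes=0),
    "calendar": Integration(two_way=True, sync_delay_minutes=5),
    "crm": Integration(two_way=False, sync_delay_minutes=24 * 60),
}

findings = audit_integrations(stack, documented)
for tool, issues in findings.items():
    print(tool, "->", issues or "ok")
```

A tool with any entry in its issue list is worth a closer look before the trial starts, which is far cheaper than finding the gap after the subscription begins.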

True cost of ownership extends well beyond the subscription fee. Migration cost: the time required to move existing data and workflows into the new system. Setup cost: the configuration time needed to make the tool reflect how the business actually operates. Training cost: the time required to bring team members to a functional level of proficiency. Ongoing maintenance: keeping the system current as the business evolves and onboarding new team members as the team grows. For a small business where time has a direct dollar value, these costs are not abstractions. A tool with a lower subscription fee and a higher total implementation cost is not actually the cheaper option.
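The arithmetic behind that last point is easy to run for your own shortlist. The sketch below is a rough first-year estimate, not a pricing model: the `ToolCost` class, the hour estimates, the monthly fees and the 75-dollar hourly rate are all made-up figures chosen to illustrate the comparison.

```python
from dataclasses import dataclass


@dataclass
class ToolCost:
    name: str
    monthly_fee: float                  # subscription fee per month
    migration_hours: float              # one-time: moving data and workflows
    setup_hours: float                  # one-time: configuration
    training_hours: float               # one-time: getting the team proficient
    maintenance_hours_per_month: float  # recurring upkeep and onboarding

    def first_year_cost(self, hourly_rate: float) -> float:
        """Subscription fees plus the dollar value of time spent in year one."""
        one_time_hours = self.migration_hours + self.setup_hours + self.training_hours
        recurring_hours = self.maintenance_hours_per_month * 12
        time_cost = (one_time_hours + recurring_hours) * hourly_rate
        return self.monthly_fee * 12 + time_cost


# Hypothetical candidates: A has the lower fee, B has the lower time cost.
tool_a = ToolCost("Tool A", monthly_fee=20, migration_hours=15,
                  setup_hours=20, training_hours=10,
                  maintenance_hours_per_month=3)
tool_b = ToolCost("Tool B", monthly_fee=60, migration_hours=5,
                  setup_hours=6, training_hours=4,
                  maintenance_hours_per_month=1)

hourly_rate = 75  # assumed value of one hour of the team's time
for tool in (tool_a, tool_b):
    print(tool.name, tool.first_year_cost(hourly_rate))
```

With these assumed numbers, Tool A costs 6,315 dollars in its first year and Tool B costs 2,745, even though Tool B's subscription is three times the price.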

Once the four filters have narrowed the shortlist to two or three genuine candidates, the trial becomes a structured test rather than an open exploration. Move real work into the tool: actual current projects with actual team members assigned. At the end of the first week ask two questions. Does the tool make the work feel clearer or more complicated than before? And can the person you involved in the trial find what they need without asking you where it is?

The final evaluation question is the most revealing one. If this tool disappeared tomorrow and the team had to go back to whatever they were using before, would anyone be frustrated by that loss? Not the founder. The team members who used it during the trial. A tool that would not be missed did not fit closely enough to create genuine operational dependency. A tool that would be missed has earned its place in the stack.

A complete breakdown of how to build a structured pre-purchase evaluation process that eliminates the most common selection mistakes before they cost anything covers each of these filters in full, including the specific questions that surface integration gaps and adoption risks during the evaluation phase rather than after.

How to build a SaaS stack for your small business without overspending

Most small businesses do not have a tool problem. They have a stack problem.

The distinction is important. Individual tools get chosen for good reasons: a recommendation from a trusted source, a free trial that demonstrated clear value, a specific problem that needed solving at a specific moment. Six months later the business is running on eight subscriptions that do not communicate with each other, create duplicate data entry at every workflow handoff and cost more combined than a single well-integrated solution would have.

Each tool was a reasonable individual decision. None of them were chosen as part of a system. That is the stack problem.

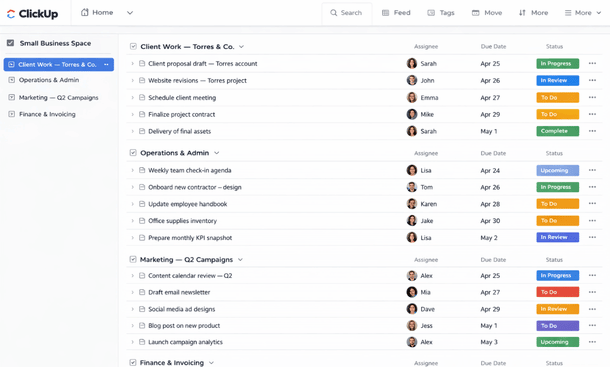

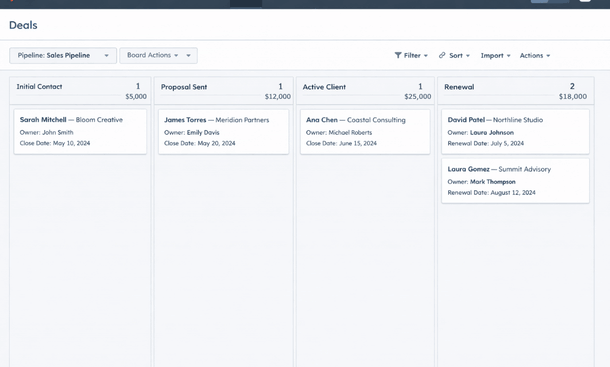

The practical difference between a tool collection and a coherent stack shows up at every handoff point in the workflow. A new lead arrives through the website. In a tool collection that lead gets entered into a contact form, manually copied into a CRM, referenced in a separate project management tool when the sales process advances and invoiced through a platform that has no connection to any of the above. Four data entry points for one event. Four opportunities for human error. Four systems holding slightly different versions of the same information.

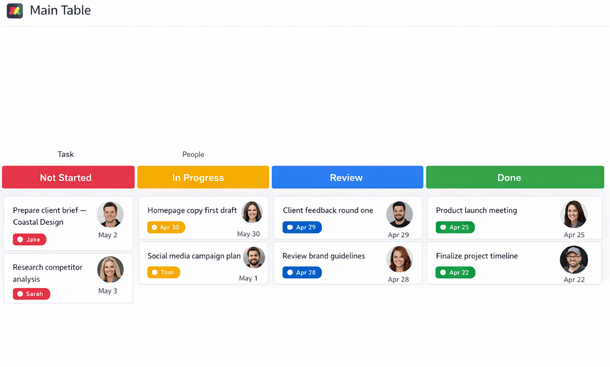

In a coherent stack that lead flows automatically from the intake form into the CRM, triggers a task in the project management tool and notifies the right team member through the communication platform without manual intervention at any step. One event, one data entry point, consistent information across every system that needs it.

Every small business regardless of industry or growth stage has operational needs that fall into five core categories. The goal is one strong tool per category that integrates cleanly with the others.

Project and task management is the operational center of the stack. Teamwork and Paymo serve service-based operations particularly well. ClickUp works for businesses wanting maximum flexibility at the cost of setup investment. Monday.com serves teams prioritizing visual workflow clarity and client-facing collaboration.

Communication is the category where most small businesses already have something in place. The relevant question is whether the current tool integrates cleanly enough with the project management layer to be genuinely useful rather than a source of disconnected notification noise.

CRM and client management is consistently the most underinvested category in small business stacks. HubSpot’s free tier covers the core needs of most small service businesses. Notion configured as a client database works well for very small operations managing fewer than ten active client relationships.

Finance and invoicing works best when a single platform natively handles at least two of the three core functions: invoicing, expense tracking and accounting. QuickBooks Online covers accounting and invoicing with appropriate depth for most small businesses. Paymo handles invoicing and project-based time tracking in a single workflow for service businesses billing by the hour.

Marketing and lead generation is where early-stage businesses most commonly over-invest. Start with what the business needs today. Mailchimp or ConvertKit covers the core requirements of most small businesses at a price point that does not require a revenue milestone to justify.

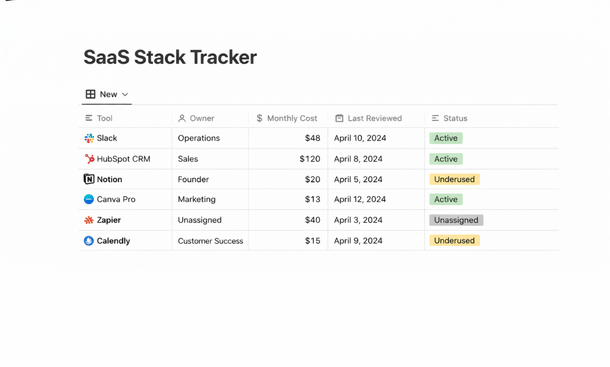

A stack audit answers four questions in two hours. Which tools are being used consistently? Which integrations are broken or missing? Which tools are being paid for at a tier that no longer matches actual usage? And which tools could be consolidated without meaningful operational loss?
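Those four questions can be answered from a simple inventory of your subscriptions. The sketch below assumes you have gathered a few numbers per tool by hand; the `Subscription` fields, the 15-active-days threshold and the sample entries are hypothetical and should be adjusted to your own stack.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Subscription:
    name: str
    category: str              # e.g. "project management", "crm"
    active_days_last_30: int   # days on which anyone used the tool
    seats_paid: int
    seats_active: int
    broken_integrations: int = 0


def audit_stack(subscriptions, min_active_days=15):
    """Group tools under the four audit questions."""
    report = {
        "underused": [],
        "broken_integrations": [],
        "oversized_tier": [],
        "consolidation_candidates": [],
    }
    by_category = defaultdict(list)
    for sub in subscriptions:
        by_category[sub.category].append(sub.name)
        if sub.active_days_last_30 < min_active_days:
            report["underused"].append(sub.name)
        if sub.broken_integrations > 0:
            report["broken_integrations"].append(sub.name)
        if sub.seats_active < sub.seats_paid:
            report["oversized_tier"].append(sub.name)
    # Two or more tools in one category may be candidates to consolidate.
    for names in by_category.values():
        if len(names) > 1:
            report["consolidation_candidates"].append(names)
    return report


# Hypothetical inventory.
stack = [
    Subscription("Tracker A", "project management", 22, 5, 5),
    Subscription("Tracker B", "project management", 3, 10, 2),
    Subscription("Client CRM", "crm", 20, 3, 3, broken_integrations=1),
]

report = audit_stack(stack)
for question, tools in report.items():
    print(question, "->", tools)
```

The output is only a starting list for the two-hour conversation; whether a flagged tool actually gets cancelled, downgraded or merged is still a judgment call.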

Understanding how to build a SaaS stack where every tool was chosen with the full system in mind, and how to audit the one you already have against that standard, is what separates a collection of subscriptions from an operational infrastructure that compounds value over time.

How to implement a new SaaS tool without losing your team in the process

Choosing the right tool is one decision. Getting a team to genuinely change how they work around it is a completely different challenge and it is the one that determines whether the investment delivers anything at all.

The hardest part of implementing a new SaaS tool is not the configuration. It is the week after the configuration when the tool is technically ready and the team is technically informed and nothing has actually changed.

Three assumptions break most small business SaaS rollouts. The assumption that announcing a tool is the same as implementing it. The assumption that capability equals motivation. And the assumption that problems will surface themselves, when they almost never do: team members find workarounds and maintain parallel systems rather than flagging friction directly.

Two conversations held before the rollout begins change the outcome more reliably than any onboarding documentation.

The first is the problem conversation: a direct discussion with team members about the specific operational problems the tool is meant to solve, from their perspective. When team members understand the problem the tool is solving from their own experience rather than the founder’s perspective, the adoption equation shifts. That conversation takes 20 minutes and produces adoption dividends that no post-launch training can replicate.

The second is the input conversation: bringing at least one team member into the setup decisions before the configuration is finalized. People use systems they helped shape at a significantly higher rate than systems handed to them fully formed.

The rollout sequence that produces lasting adoption follows four phases. Week one: the founder uses the tool with real work before asking anyone else to. Week two: a structured 30-minute team walkthrough covers the three or four core actions the team will perform most often, not every feature, just the daily essentials. Weeks three and four: a direct friction audit surfaces the specific problems creating resistance, and targeted fixes address them before they compound into reasons to abandon the system.

Three lightweight metrics tracked consistently tell you where the implementation stands. Daily active users: how many team members logged in at least once per day during the previous week. Task creation rate: whether tasks are being created at a volume reflecting how much work is actually happening. And clarification messages: how many times per week team members ask questions the tool should already be answering.
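These three numbers can be pulled together in a few lines once you have a week of login and task data. The sketch below is one possible reading of the metrics, not a standard definition: it counts a team member as daily active only if they logged in on every tracked day, and it measures task creation as tasks created in the tool divided by work items you know were started. The function name, inputs and sample data are all assumptions.

```python
def adoption_snapshot(team, logins_by_day, tasks_created,
                      work_items_started, clarification_messages):
    """Summarise one week of adoption data as three numbers."""
    # Daily active users: members who logged in on every tracked day.
    if logins_by_day:
        daily_active = set(team)
        for users in logins_by_day.values():
            daily_active &= set(users)
    else:
        daily_active = set()

    # Task creation rate: share of real work that shows up in the tool.
    if work_items_started:
        rate = tasks_created / work_items_started
    else:
        rate = 0.0

    return {
        "daily_active_users": len(daily_active),
        "task_creation_rate": rate,
        "clarification_messages": clarification_messages,
    }


# Hypothetical week for a three-person team.
team = {"ana", "ben", "cy"}
logins_by_day = {
    "Mon": {"ana", "ben", "cy"},
    "Tue": {"ana", "ben"},
    "Wed": {"ana"},
    "Thu": {"ana", "ben"},
    "Fri": {"ana", "ben"},
}

snapshot = adoption_snapshot(team, logins_by_day, tasks_created=12,
                             work_items_started=20,
                             clarification_messages=9)
print(snapshot)
```

In this made-up week only one of three people used the tool every day and 60 percent of the work reached it, which points to a habit problem rather than a configuration problem.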

The complete guide to implementing a new SaaS tool in a way that produces genuine team adoption rather than nominal compliance walks through each phase of this sequence in detail, including how to handle the period between week two and week six when the system is real but the habit is not yet stable.

SaaS tool mistakes small business owners make and how to avoid every one of them

Most SaaS mistakes do not feel like mistakes when they happen. They feel like reasonable decisions made with the information available at the time. The problem is that the most expensive ones are structural patterns that produce predictable bad outcomes regardless of which specific tools are involved.

Seven mistakes show up consistently across small business SaaS stacks.

Buying for the business you want instead of the business you have. A tool chosen for anticipated future scale creates overhead now and may never deliver the value that justified it if growth does not materialize on schedule. Before any purchase, ask: does this tool solve a problem the business has today, or one it might have in a year?

The over-tooled stack. Each individual addition made sense at the time and nobody audited the stack as a whole. Over-tooling is not just a budget problem; it is a cognitive load problem where team members spend as much time deciding which tool to use as actually doing the work.

Ignoring the integration layer until it is too late. Integration failures are most expensive when they are discovered after the purchase. A pre-purchase integration audit takes less than an hour and can save dozens of hours over the following year.

Treating the free plan as a long-term strategy. Free plans are deliberately limited in ways that become increasingly constraining as the business scales. Staying on a free plan past the point where its limitations create genuine friction costs more in workaround time than the upgrade it avoids.

No owner, no accountability. Every tool in the stack needs one named person responsible for keeping the configuration current and flagging when it is not delivering value. Without a designated owner tools drift until someone finally acknowledges that nothing has been working properly for a long time.

Switching tools instead of fixing the implementation. General feedback ("it feels complicated", "nobody remembers to update it") is almost always an implementation problem that a new tool will not resolve. Switching resets the entire implementation clock while leaving the root cause intact.

Never auditing the stack. A quarterly audit of two hours answers four questions: which tools are being used consistently, which integrations are broken, which tiers no longer match actual usage and which tools could be consolidated. The cost of not doing it is visible on the credit card statement every month.

The full breakdown of the specific SaaS mistakes that derail even well-chosen tools over time, and the habits that prevent each one, covers every pattern above in detail with concrete prevention strategies for each.

There is a version of running a small business where the tools you use actually make the work easier. Where your team knows where everything lives, where data moves between systems without anyone manually copying it and where the monthly subscription total represents genuine operational value rather than a collection of good intentions that never quite delivered on their promise.

That version is not the result of choosing the most popular tools or the most feature-rich platforms. It is the result of making deliberate decisions about which tools to evaluate, how to evaluate them, how to build them into a coherent system and how to implement them in a way that produces lasting behavioral change rather than a two-week experiment that quietly fades.

The framework across every section of this guide points to the same conclusion. The problem in most small business SaaS stacks is not the tools themselves. It is the process, or the absence of one, that surrounds every tool decision from initial evaluation through long-term maintenance.

Getting that process right does not require technical expertise or a large budget. It requires four filters applied before any free trial begins. An honest audit of how work currently moves through the business before any tool enters the conversation. An integration check completed before the subscription starts. An implementation plan built before the rollout happens. Ownership assigned before the configuration drifts. And a quarterly audit scheduled before the next crisis forces the review that should have been routine.

Each of those habits costs time upfront. Each of them saves significantly more time downstream than it consumes. And together they produce something that most small business owners spend years trying to build through trial and error: a tool stack that compounds operational value over time rather than silently draining it through abandoned subscriptions, broken integrations and implementations that never fully stuck.

The cycle that most small businesses are caught in (reactive decisions, failed adoptions, switched tools, repeated mistakes) is not inevitable. It is predictable. And predictable cycles can be broken once the pattern is visible and the alternative is structured enough to follow.

The most immediately useful place to start is not with a new tool evaluation. It is with an honest look at why the previous ones failed, because that diagnosis is what determines whether the next decision produces a different outcome or repeats the same pattern in a new interface.

That diagnosis, and the structural reasons behind it, are laid out in full in why SaaS tools fail small businesses and what the real cause of those failures looks like in practice, the clearest starting point for building a SaaS decision process that finally produces results worth the investment.
